PROJECT DETAILS

AgriDrone Vision Evaluation Pipeline

**AgriDrone Vision Evaluation Pipeline** is a generalized and anonymized technical case study of an applied computer vision and geospatial machine learning system for agricultural drone imagery.

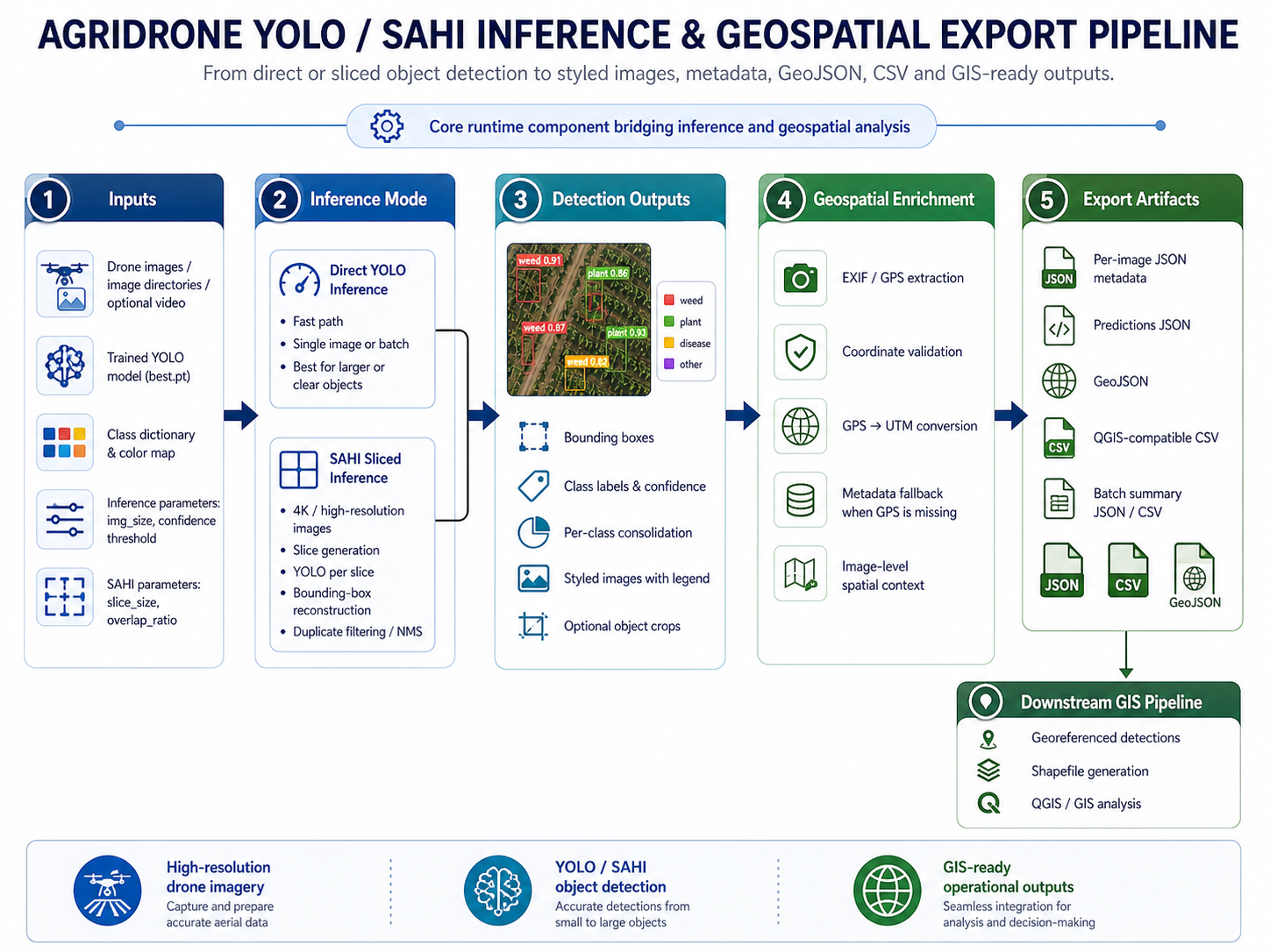

The project documents the design of an end-to-end ML engineering workflow capable of processing high-resolution UAV images and videos, running object detection models, validating model performance, generating reproducible evaluation artifacts, enriching detections with geospatial metadata, and exporting results into formats suitable for GIS analysis, technical reporting, and downstream agricultural decision-support workflows.

At its core, the system applies **YOLOv8 / YOLOv11** object detection models to agricultural imagery captured by drones. The pipeline supports both direct YOLO inference and **SAHI-based sliced inference** (Slicing Aided Hyper Inference), which is especially useful for high-resolution images in which small objects may be lost when the full frame is downscaled to the model's input size. SAHI divides the image into overlapping slices, runs the detector on each slice independently, maps the slice-level predictions back into full-image coordinates, and merges duplicate detections from the overlap regions. This improves the detection of small or densely packed objects in aerial scenes.
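The slicing and merging steps can be illustrated in plain Python. This is a minimal sketch, not SAHI's implementation: in practice the library's `sahi.predict.get_sliced_prediction` handles slicing, coordinate shifting, and merging. The `Box` type, the 640-pixel slice size, the 0.2 overlap ratio, and the class-wise greedy NMS merge are illustrative assumptions (SAHI also offers other merge strategies).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned detection in pixel coordinates (hypothetical helper type)."""
    x1: float
    y1: float
    x2: float
    y2: float
    score: float
    label: str


def slice_windows(width, height, slice_w=640, slice_h=640, overlap=0.2):
    """Return (x0, y0, x1, y1) windows that cover the image with fractional overlap."""
    step_x = max(1, int(slice_w * (1 - overlap)))
    step_y = max(1, int(slice_h * (1 - overlap)))
    xs = list(range(0, max(width - slice_w, 0) + 1, step_x))
    ys = list(range(0, max(height - slice_h, 0) + 1, step_y))
    # Add a final window flush with the right/bottom edge if the stride skipped it.
    if xs[-1] + slice_w < width:
        xs.append(width - slice_w)
    if ys[-1] + slice_h < height:
        ys.append(height - slice_h)
    return [(x, y, min(x + slice_w, width), min(y + slice_h, height)) for y in ys for x in xs]


def shift_to_full_image(box, offset_x, offset_y):
    """Map a slice-local box back into full-image coordinates."""
    return Box(box.x1 + offset_x, box.y1 + offset_y,
               box.x2 + offset_x, box.y2 + offset_y, box.score, box.label)


def iou(a, b):
    """Intersection over union of two boxes."""
    ix = max(0.0, min(a.x2, b.x2) - max(a.x1, b.x1))
    iy = max(0.0, min(a.y2, b.y2) - max(a.y1, b.y1))
    inter = ix * iy
    union = (a.x2 - a.x1) * (a.y2 - a.y1) + (b.x2 - b.x1) * (b.y2 - b.y1) - inter
    return inter / union if union > 0 else 0.0


def merge_class_wise_nms(boxes, iou_threshold=0.5):
    """Greedy NMS per class: keep the highest-scoring box among overlapping duplicates."""
    kept = []
    for box in sorted(boxes, key=lambda b: b.score, reverse=True):
        if all(k.label != box.label or iou(k, box) < iou_threshold for k in kept):
            kept.append(box)
    return kept
```

With these defaults, a 1000 × 700 image is covered by four overlapping windows. Every extra slice is one more forward pass, which is where SAHI's runtime overhead comes from.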

The documented workflow includes several stages of the machine learning lifecycle: dataset configuration, model training orchestration, validation, benchmarking, automatic best-model selection, inference, post-processing, evaluation, visualization, geospatial export, and technical documentation. It is not presented as a simple model-training notebook, but as a broader ML engineering system that connects experimentation, inference, evaluation, and geospatial analysis.

The documentation for the training and validation layer describes how YOLO models can be trained and evaluated across configurations such as model version, image size, batch size, confidence threshold, device selection, and dataset structure. It also documents model-selection logic based on experiment metrics, which lets the system identify the best available `best.pt` checkpoint from previous runs. The validation and benchmarking workflow covers global metrics, per-class metrics, inference timing, GPU-aware execution, and reproducible artifact generation.
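Best-checkpoint selection can be sketched as a scan over previous run directories. This assumes an Ultralytics-style layout (`runs/detect/<run>/results.csv` next to `runs/detect/<run>/weights/best.pt`) and ranks runs by the `metrics/mAP50-95(B)` column. The layout, the ranking metric, and the function names are all assumptions made for illustration.

```python
import csv
from pathlib import Path
from typing import Optional


def best_epoch_metric(results_csv: Path, metric: str) -> Optional[float]:
    """Return the highest per-epoch value of `metric`, or None if the column is missing."""
    values = []
    with results_csv.open(newline="") as fh:
        for row in csv.DictReader(fh):
            # Some versions pad CSV header names with spaces, so normalize the keys.
            clean = {k.strip(): v for k, v in row.items() if k is not None}
            raw = (clean.get(metric) or "").strip()
            if raw:
                values.append(float(raw))
    return max(values) if values else None


def select_best_checkpoint(runs_root: Path, metric: str = "metrics/mAP50-95(B)") -> Optional[Path]:
    """Pick the best.pt whose run reached the highest value of `metric`."""
    best_path, best_score = None, float("-inf")
    for results_csv in sorted(Path(runs_root).glob("*/results.csv")):
        checkpoint = results_csv.parent / "weights" / "best.pt"
        if not checkpoint.exists():
            continue  # skip runs that stopped before saving a checkpoint
        score = best_epoch_metric(results_csv, metric)
        if score is not None and score > best_score:
            best_path, best_score = checkpoint, score
    return best_path
```

Because this lineage lives only on the filesystem, renaming or deleting a run directory silently changes which checkpoint wins.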

The evaluation layer covers COCO-style evaluation: converting YOLO annotations and predictions into COCO-compatible structures and computing metrics such as AP50, AP50:95, precision, recall, and F1-score. The documentation distinguishes between YOLO-native validation metrics, COCO-style evaluation metrics, and operational inference outputs, making clear that each type of metric answers a different question about model performance.
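Converting YOLO labels into COCO structures is mostly a coordinate change. YOLO stores a class index plus a box center and size normalized to [0, 1], while COCO stores `[x_min, y_min, width, height]` in pixels. The sketch below assumes plain YOLO text lines (`class cx cy w h`, with an optional trailing confidence on prediction lines) and exposes a hypothetical `category_offset` for projects that prefer 1-based category ids. The helper names are hypothetical; the dictionary keys follow the COCO annotation and results fields that pycocotools reads.

```python
def yolo_to_coco_bbox(cx, cy, w, h, img_w, img_h):
    """YOLO normalized (cx, cy, w, h) -> COCO absolute [x_min, y_min, width, height]."""
    abs_w, abs_h = w * img_w, h * img_h
    return [cx * img_w - abs_w / 2, cy * img_h - abs_h / 2, abs_w, abs_h]


def yolo_line_to_coco(line, image_id, ann_id, img_w, img_h, category_offset=0):
    """Parse one YOLO label or prediction line into a COCO-style dict."""
    parts = line.split()
    class_id = int(parts[0])
    cx, cy, w, h = (float(v) for v in parts[1:5])
    bbox = yolo_to_coco_bbox(cx, cy, w, h, img_w, img_h)
    record = {
        "id": ann_id,
        "image_id": image_id,
        # Ground truth and predictions must use the same category mapping.
        "category_id": class_id + category_offset,
        "bbox": bbox,
        "area": bbox[2] * bbox[3],
        "iscrowd": 0,
    }
    if len(parts) > 5:  # prediction files may append a confidence score
        record["score"] = float(parts[5])
    return record


def precision_recall_f1(tp, fp, fn):
    """Point metrics at one fixed threshold; AP instead summarizes the whole PR curve."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1
```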

The inference layer supports processing individual images, full directories of images, high-resolution drone imagery through SAHI, and video inputs. For static imagery, the system generates styled images with bounding boxes, class labels, confidence scores, per-image JSON metadata, batch summaries, and geospatial outputs. For video, the system documents a dedicated video inference and object tracking processor that uses YOLO tracking IDs to count unique objects across frames, render annotated videos, generate JSON summaries, and optionally produce frame-level SRT artifacts.
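A per-image metadata record and a batch summary of the kind described above might look like the following. The field names (`image`, `num_detections`, `detections_per_class`, and so on) are illustrative assumptions rather than a documented schema. Casting scores and coordinates to built-in `float` before serializing avoids a common failure: some NumPy scalar types, such as `float32`, are not JSON-serializable.

```python
import json
from collections import Counter


def image_record(image_name, width, height, detections):
    """detections: iterable of (label, score, (x1, y1, x2, y2)) in pixel coordinates."""
    items = [
        {
            "label": str(label),
            "confidence": round(float(score), 4),
            "bbox_xyxy": [float(v) for v in box],
        }
        for label, score, box in detections
    ]
    return {"image": image_name, "width": int(width), "height": int(height),
            "num_detections": len(items), "detections": items}


def batch_summary(records):
    """Aggregate per-image records into one summary for the whole batch."""
    per_class = Counter(d["label"] for r in records for d in r["detections"])
    return {
        "images_processed": len(records),
        "images_with_detections": sum(1 for r in records if r["num_detections"] > 0),
        "total_detections": sum(per_class.values()),
        "detections_per_class": dict(sorted(per_class.items())),
    }


if __name__ == "__main__":
    records = [
        image_record("IMG_0001.JPG", 4000, 3000, [("weed", 0.91, (10, 20, 60, 80))]),
        image_record("IMG_0002.JPG", 4000, 3000, []),
    ]
    print(json.dumps(batch_summary(records), indent=2))
```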

A major part of the project is the integration between computer vision and geospatial processing. The system does not stop at object detection. It enriches detections with EXIF/GPS metadata when available, converts latitude and longitude into UTM coordinates, and prepares structured spatial outputs for GIS workflows. These outputs may include GeoJSON, CSV, Shapefiles, QGIS-compatible summaries, and spatial metadata records. This allows model predictions to be inspected, analyzed, and visualized in geospatial tools instead of remaining isolated as pixel-space bounding boxes.
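The metadata-to-coordinates step can be sketched in three parts. First, EXIF GPS degrees/minutes/seconds become signed decimal degrees. Next, WGS 84 latitude/longitude is projected into UTM with the standard transverse Mercator series (Snyder's USGS formulation). Finally, a point is wrapped as a GeoJSON feature. This is a self-contained illustration under stated assumptions. It works on the image's GPS position, which is the camera position rather than the ground position of each bounding box. It also ignores the Norway/Svalbard zone exceptions, and the function names are hypothetical. A production pipeline would more likely delegate the projection to a library such as pyproj or GDAL/OSR.

```python
import math

WGS84_A = 6378137.0              # semi-major axis (m)
WGS84_F = 1 / 298.257223563      # flattening
K0 = 0.9996                      # UTM central-meridian scale factor


def dms_to_decimal(dms, ref):
    """EXIF (degrees, minutes, seconds) plus 'N'/'S'/'E'/'W' -> signed decimal degrees."""
    degrees, minutes, seconds = (float(v) for v in dms)
    value = degrees + minutes / 60.0 + seconds / 3600.0
    return -value if str(ref).upper() in ("S", "W") else value


def utm_zone(lon):
    """Standard 6-degree zone number (ignores the Norway/Svalbard exceptions)."""
    return min(int((lon + 180.0) // 6.0) + 1, 60)


def latlon_to_utm(lat, lon):
    """Project WGS 84 lat/lon (degrees) to (easting, northing, zone, EPSG code)."""
    zone = utm_zone(lon)
    e2 = WGS84_F * (2 - WGS84_F)          # first eccentricity squared
    ep2 = e2 / (1 - e2)                   # second eccentricity squared
    phi = math.radians(lat)
    lon0 = math.radians((zone - 1) * 6 - 180 + 3)  # central meridian of the zone
    n = WGS84_A / math.sqrt(1 - e2 * math.sin(phi) ** 2)
    t = math.tan(phi) ** 2
    c = ep2 * math.cos(phi) ** 2
    a = math.cos(phi) * (math.radians(lon) - lon0)
    # Meridional arc length from the equator to latitude phi.
    m = WGS84_A * (
        (1 - e2 / 4 - 3 * e2**2 / 64 - 5 * e2**3 / 256) * phi
        - (3 * e2 / 8 + 3 * e2**2 / 32 + 45 * e2**3 / 1024) * math.sin(2 * phi)
        + (15 * e2**2 / 256 + 45 * e2**3 / 1024) * math.sin(4 * phi)
        - (35 * e2**3 / 3072) * math.sin(6 * phi)
    )
    easting = 500000.0 + K0 * n * (
        a + (1 - t + c) * a**3 / 6
        + (5 - 18 * t + t**2 + 72 * c - 58 * ep2) * a**5 / 120
    )
    northing = K0 * (m + n * math.tan(phi) * (
        a**2 / 2 + (5 - t + 9 * c + 4 * c**2) * a**4 / 24
        + (61 - 58 * t + t**2 + 600 * c - 330 * ep2) * a**6 / 720
    ))
    if lat < 0:
        northing += 10_000_000.0  # false northing in the southern hemisphere
    epsg = (32600 if lat >= 0 else 32700) + zone  # WGS 84 / UTM zone N or S
    return easting, northing, zone, epsg


def point_feature(lat, lon, properties):
    """GeoJSON Point feature; RFC 7946 positions are [longitude, latitude]."""
    return {"type": "Feature",
            "geometry": {"type": "Point", "coordinates": [lon, lat]},
            "properties": properties}
```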

The project also documents a raster georeferencing workflow for styled detection images. This includes copying EXIF/XMP metadata from original drone images, generating JGW world files for styled JPEG outputs, documenting CRS assumptions, supporting optional GeoTIFF fallback, and enabling QGIS-oriented raster loading workflows. This raster-oriented layer is separate from vector outputs like GeoJSON and Shapefiles, and it addresses the practical need to visually overlay annotated detection imagery inside GIS tools.
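A world file is a six-line text sidecar that maps pixel positions to map coordinates; for JPEG output it is conventionally given a `.jgw` extension. The sketch below writes one for a north-up image. It assumes the upper-left corner coordinate and the ground sampling distance are already known in a projected CRS such as UTM. Deriving them from a single drone photo requires further assumptions about altitude, camera geometry, and orientation. World files carry no CRS information, so the CRS still has to be declared separately, for example when loading the layer in QGIS. The function name and parameters are hypothetical.

```python
from pathlib import Path


def write_jgw(image_path, ulx, uly, gsd_x, gsd_y=None):
    """Write `<image>.jgw` for a north-up image.

    ulx, uly: map coordinates of the outer upper-left corner of the image
    gsd_x, gsd_y: ground size of one pixel in map units (gsd_y defaults to gsd_x)
    """
    gsd_y = gsd_x if gsd_y is None else gsd_y
    values = [
        gsd_x,                   # A: pixel width in map units
        0.0,                     # D: rotation term (0 for north-up)
        0.0,                     # B: rotation term (0 for north-up)
        -abs(gsd_y),             # E: pixel height, negative because rows run southward
        ulx + gsd_x / 2.0,       # C: x of the center of the upper-left pixel
        uly - abs(gsd_y) / 2.0,  # F: y of the center of the upper-left pixel
    ]
    out = Path(image_path).with_suffix(".jgw")
    out.write_text("\n".join(f"{v:.6f}" for v in values) + "\n")
    return out
```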

The video processing component introduces a different type of complexity because video inference is not simply image inference repeated over frames. It includes temporal state, frame decoding, YOLO `model.track()` execution, extraction of bounding boxes and tracking IDs, unique object counting, rendering of overlays, video writing, SRT generation, frame-level error handling, and resource finalization. The documentation captures risks such as tracker ID instability, missing `box.id` values, RGB/BGR color-space issues, JSON serialization problems, and performance bottlenecks caused by frame-by-frame processing.
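Two of the video-specific pieces, counting unique tracked objects and writing SRT timestamps, can be sketched without running a model. The sketch assumes each frame has already been reduced to a list of `(track_id, label)` pairs. In Ultralytics these would come from the results of `model.track(frame, persist=True)`, where the per-frame `boxes.id` can be `None` when the tracker has not assigned IDs. Assigning each track its majority label is an assumption that guards against class flicker on the same object; it does not fix ID switches, which still inflate counts. The function names are hypothetical.

```python
from collections import Counter, defaultdict


def count_unique_tracks(frames):
    """frames: iterable of per-frame lists of (track_id, label); track_id may be None.

    Returns ({label: unique_track_count}, detections_without_id).
    """
    votes = defaultdict(Counter)  # track_id -> how often each label was seen on it
    untracked = 0
    for detections in frames:
        for track_id, label in detections:
            if track_id is None:
                untracked += 1  # detected, but no tracker ID in this frame
                continue
            votes[int(track_id)][label] += 1
    per_class = Counter(labels.most_common(1)[0][0] for labels in votes.values())
    return dict(sorted(per_class.items())), untracked


def srt_timestamp(seconds):
    """Seconds -> SRT 'HH:MM:SS,mmm'."""
    total_ms = int(round(seconds * 1000))
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def srt_entry(index, frame_idx, fps, text):
    """One SRT cue spanning a single frame; separate cues with a blank line."""
    start = srt_timestamp(frame_idx / fps)
    end = srt_timestamp((frame_idx + 1) / fps)
    return f"{index}\n{start} --> {end}\n{text}\n"
```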

The system also documents important engineering constraints and trade-offs. These include SAHI runtime overhead, GPU/CUDA memory pressure, cuDNN runtime assumptions, filesystem-based experiment lineage, path fragility, lack of formal retry logic, absence of background workers, output idempotency concerns, metadata quality issues, CRS ambiguity, and the difference between a research-grade batch pipeline and a fully production-ready platform. These limitations are intentionally documented to show realistic engineering judgment rather than presenting the system as a finished enterprise product.
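One common way to address the output-idempotency concern is to skip work whose output already exists and to write each output atomically. With atomic writes, an interrupted run never leaves a truncated JSON file that a rerun would then mistake for finished output. This is an illustrative mitigation, not part of the documented system; `write_json_atomic` and `process_once` are hypothetical helpers.

```python
import json
import os
import tempfile
from pathlib import Path


def write_json_atomic(path, payload):
    """Write JSON to a temp file in the same directory, then rename it into place."""
    path = Path(path)
    path.parent.mkdir(parents=True, exist_ok=True)
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    try:
        with os.fdopen(fd, "w") as fh:
            json.dump(payload, fh, indent=2, sort_keys=True)
        os.replace(tmp_name, path)  # atomic rename on POSIX within one filesystem
    except BaseException:
        if os.path.exists(tmp_name):
            os.unlink(tmp_name)
        raise
    return path


def process_once(image_path, out_dir, run_inference, overwrite=False):
    """Return (output_path, ran); skips images whose JSON output already exists."""
    out_path = Path(out_dir) / f"{Path(image_path).stem}.json"
    if out_path.exists() and not overwrite:
        return out_path, False
    return write_json_atomic(out_path, run_inference(image_path)), True
```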

From a software architecture perspective, the project is organized around several conceptual services and processing layers: CLI orchestration, model selection, training, validation, benchmarking, inference, SAHI reconstruction, video tracking, geospatial enrichment, raster georeferencing, GIS export, reporting, and documentation rendering. The current public version describes these as generalized architectural components rather than publishing proprietary source code or institutional implementation details.
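The CLI orchestration layer can be pictured as a thin argument parser that dispatches to one handler per stage. Everything here is hypothetical: the `agridrone` program name, the subcommands, the flags, and the defaults. It only illustrates how stage selection, SAHI toggling, and automatic checkpoint selection could be exposed without tying the parser to a particular implementation.

```python
import argparse


def build_parser():
    parser = argparse.ArgumentParser(prog="agridrone", description="Hypothetical pipeline CLI.")
    sub = parser.add_subparsers(dest="command", required=True)

    train = sub.add_parser("train", help="train a YOLO model")
    train.add_argument("--data", required=True, help="dataset YAML")
    train.add_argument("--model", default="yolov8n.pt")
    train.add_argument("--imgsz", type=int, default=640)
    train.add_argument("--batch", type=int, default=16)
    train.add_argument("--device", default="0")

    predict = sub.add_parser("predict", help="run inference on an image or directory")
    predict.add_argument("source")
    predict.add_argument("--weights", default="auto", help="'auto' = best checkpoint from previous runs")
    predict.add_argument("--conf", type=float, default=0.25)
    predict.add_argument("--sahi", action="store_true", help="enable sliced inference")
    predict.add_argument("--slice-size", type=int, default=640)

    video = sub.add_parser("video", help="track objects in a video")
    video.add_argument("source")
    video.add_argument("--srt", action="store_true", help="also write frame-level SRT")
    return parser


def main(argv=None, handlers=None):
    """Parse arguments and dispatch to the handler registered for the subcommand."""
    args = build_parser().parse_args(argv)
    handler = (handlers or {}).get(args.command)
    if handler is None:
        raise SystemExit(f"no handler registered for {args.command!r}")
    return handler(args)
```

Keeping the handlers in a mapping passed to `main` keeps the parser importable and testable without loading PyTorch or initializing CUDA.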

The repository is intended to demonstrate applied ML engineering capabilities across multiple domains: computer vision, object detection, high-resolution image processing, geospatial metadata handling, GIS integration, video analytics, evaluation methodology, GPU-aware workflows, and technical documentation. It shows how a machine learning pipeline can be extended beyond model inference into reproducible evaluation, spatial intelligence, reporting, and practical analysis workflows.

This public repository is a sanitized portfolio version. It does not include private datasets, source code from proprietary systems, trained model weights, real drone imagery, real field coordinates, real shapefiles, real GeoJSON outputs, production credentials, institutional or client names, unpublished experimental results, or confidential operational details. The documentation is presented as a generalized technical case study focused on architecture, methodology, engineering decisions, and system design patterns.

Framework: AI / Computer Vision

Technology Stack:

Python, PyTorch, Ultralytics YOLOv8/YOLOv11, SAHI, OpenCV, Pillow, NumPy, Pandas, pycocotools, ClearML, CUDA, cuDNN, EXIF/GPS metadata processing, UTM coordinate conversion, GeoJSON, Shapefile, JGW world files, GeoTIFF, QGIS, PyQGIS, GDAL/OGR, Markdown documentation

About Team

Company / Institution: Anonymized Agricultural Computer Vision R&D Case Study

Development Team: Marco Parra

Project Images